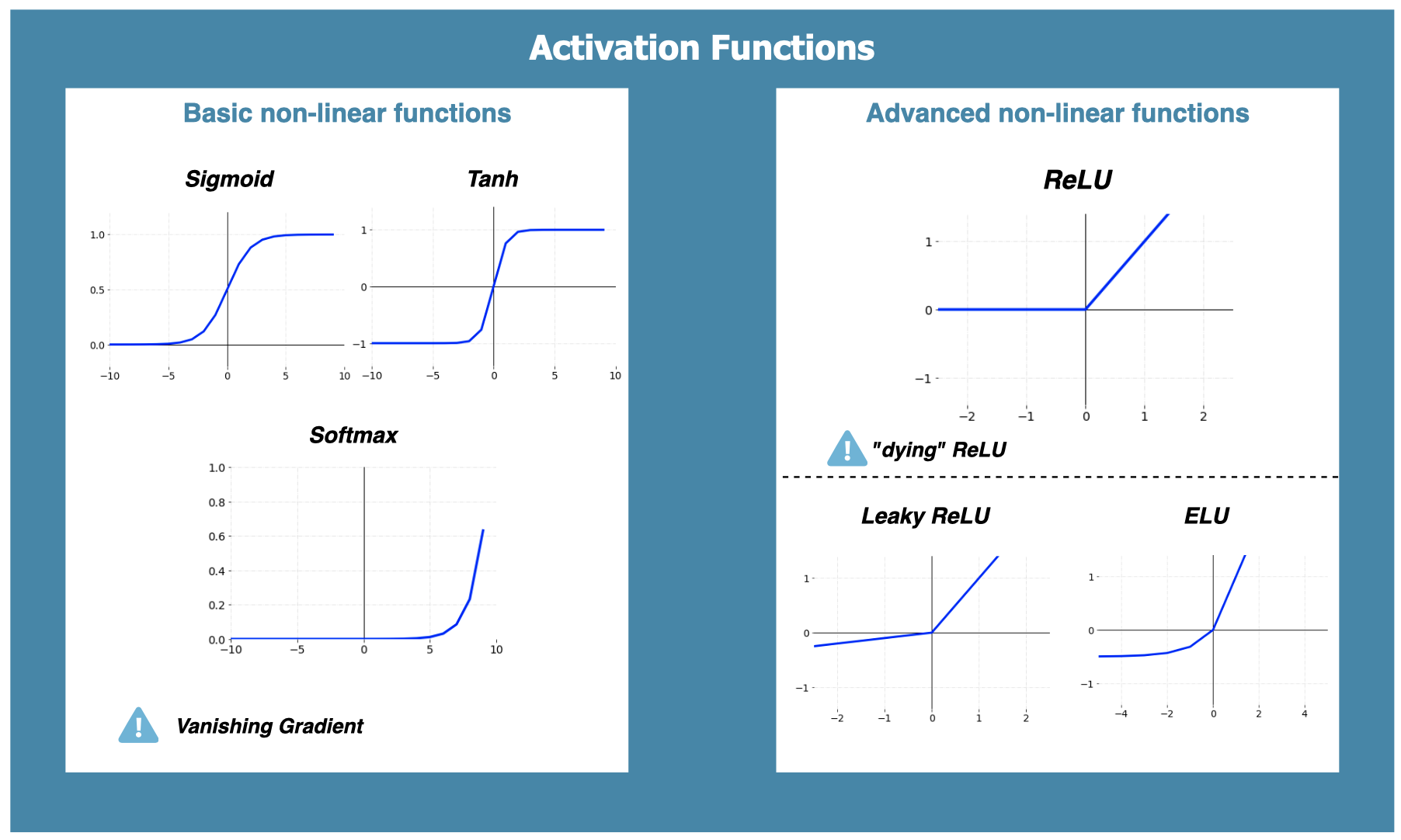

Damien Benveniste, PhD on LinkedIn: #machinelearning #datascience #artificialintelligence | 78 comments

Deep Learning 101: Transformer Activation Functions Explainer - Sigmoid, ReLU, GELU, Swish — Salt Data Labs

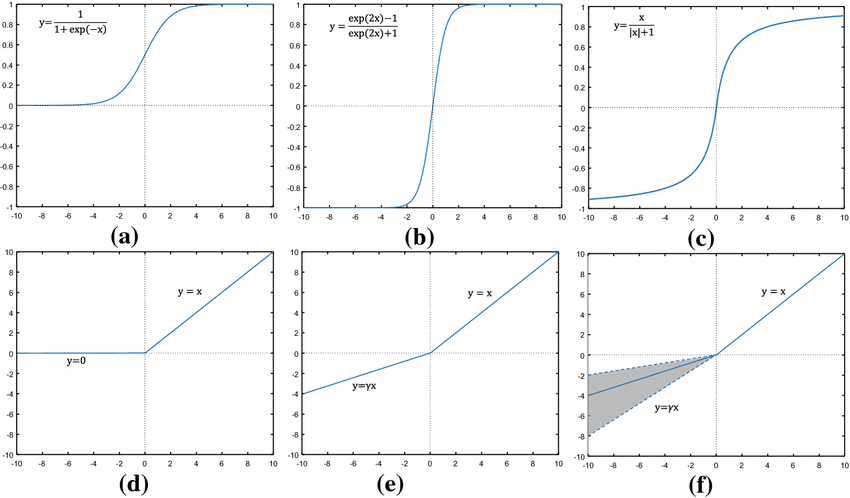

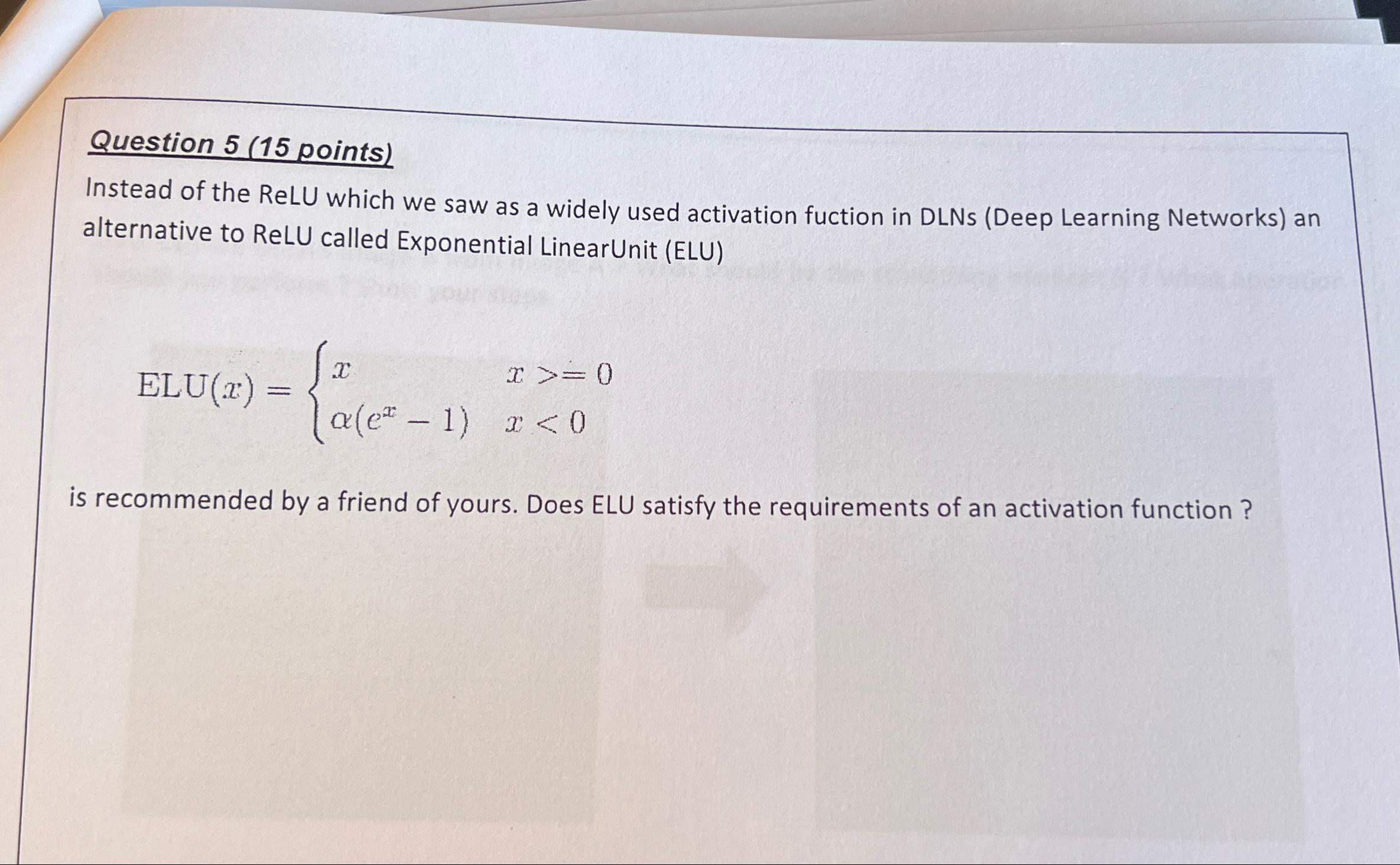

ReLU Alternative: Understanding and Using the Delta Function | by Cebrail Kutlar | Feb, 2024 | Medium

![Different Activation Functions. a ReLU and Leaky ReLU [37], b Sigmoid... | Download Scientific Diagram](https://www.researchgate.net/publication/339905203/figure/fig3/AS:868603377225728@1584102591508/Different-Activation-Functions-a-ReLU-and-Leaky-ReLU-37-b-Sigmoid-Activation-Function.png)

Different Activation Functions. a ReLU and Leaky ReLU [37], b Sigmoid... | Download Scientific Diagram

Are there any alternatives to activation functions to add non-linearity to a CNN? - ABC of DataScience and ML - Quora

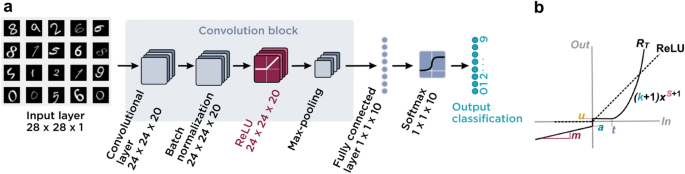

Multimodal transistors as ReLU activation functions in physical neural network classifiers | Scientific Reports

Graph of activation functions of sigmoid (left), tanh (center) and ReLU... | Download Scientific Diagram

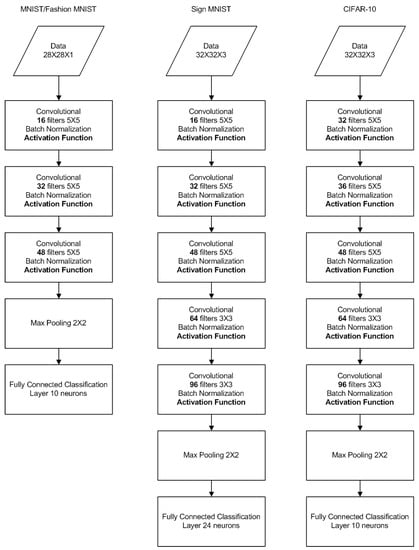

Information | Free Full-Text | Learnable Leaky ReLU (LeLeLU): An Alternative Accuracy-Optimized Activation Function

![Activation Functions in Neural Networks [12 Types & Use Cases]](https://assets-global.website-files.com/5d7b77b063a9066d83e1209c/627d12431fbd5e61913b7423_60be4975a399c635d06ea853_hero_image_activation_func_dark.png)

![Activation functions in neural networks [Updated 2024] | SuperAnnotate](https://assets-global.website-files.com/614c82ed388d53640613982e/64a6c2b152d0d53ed368032f_gelu%20vs%20relu%20vs%20elu.webp)

![Activation Functions in Neural Networks [12 Types & Use Cases]](https://assets-global.website-files.com/5d7b77b063a9066d83e1209c/60d248619e91e2238803bb97_pasted%20image%200%20(11).jpg)

![Activation functions in neural networks [Updated 2024] | SuperAnnotate](https://assets-global.website-files.com/614c82ed388d53640613982e/64a6c2213bb39f58495396c9_leaky%20relu%20function.webp)